Apr 22, 2009

Paving the Bare Spots and Following the Guidon

$1M buys ten developers for a year at a burdened salary of $100K each. That is two small agile development teams or one large one, and a year is roughly 24 biweekly sprints. That may seem like small potatoes to SDLC advocates, but agile teams can pull off amazing things in a year, and 24 sprints leave lots of opportunities for redirection and convergence. The question is how such teams could be coordinated so that they converge over time on something useful at larger scales (where large means government- or at least agency-wide).

Although it may seem wasteful to launch projects without the usual heavy planning, notice that there are no billion- or even million-dollar projects at risk. There is just the risk of a single sprint, about $1M/24, or a little over $40K. Performance is easily monitored during each sprint review, so non-performing projects can be terminated or redirected quickly. And perhaps best of all, costs are so affordable that all agencies could participate. There is no approval process that would prevent some agencies from improving their performance through information technology.
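The arithmetic is easy to check. Here is a minimal sketch that uses only the figures quoted above ($1M budget, $100K burdened salary, 24 sprints); the variable names are mine and purely illustrative.

```python
# Figures taken from the text above; the names are illustrative only.
BUDGET = 1_000_000          # total annual budget, in dollars
BURDENED_SALARY = 100_000   # cost of one developer for one year
SPRINTS_PER_YEAR = 24       # sprint count used in the text

# How many developers the budget funds for a year.
developers = BUDGET // BURDENED_SALARY

# The most that is at risk before the next sprint review.
cost_per_sprint = BUDGET / SPRINTS_PER_YEAR

print(f"developers funded: {developers}")
print(f"at risk per sprint: ${cost_per_sprint:,.0f}")
```

The point of the calculation is the second number: the exposure between review points is one sprint's worth of spending, not the whole budget.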

Let's assume that central management is commensurate with the small ($1M) project sizes and consider how to accomplish the most at the periphery with minimum management at the center. Two notions seem productive:

Paving the Bare Spots: This is explained in this paper (pdf) from just after my time with the architecture team for DISA's Netcentric Core Enterprise Systems (NCES). The title refers to a novel way of keeping students off the grass once "keep off the grass" signs have been tried and failed (as they always seem to do). The new approach is to just let the students walk where they wish and pave the bare spots behind them. Works every time.

How might that apply to the president's agenda of radically improving agency performance? See the paper for details, but in short, it involves a digital "enterprise space" modeled after some standards bodies and especially the Apache Software Foundation. This space is what you'd expect of such organizations: a download area for ready-to-use software (and someday "trusted components" as explained elsewhere on this blog), a source code repository for sharing code, a wiki or similar for sharing ideas, and so forth. And there's a governing body that helps coordinate the work (as distinct from managing it; management is largely handled by each team) and accepts long-term ownership of the results.

Follow the Guidon: This is based on the role the flag-bearer once played in military formations. Troops were taught to follow the guidon. They were free to exploit local tacit knowledge, route around obstacles, duck and cover if need be, yet converge on the goal set by management. The key thing here is that it was apparent to all that the leadership (or at least their flag bearer!) was out there in front, leading the way and assuring that everyone converged on the goal.

A guidon is just a flag on a stick; a lightweight and easy to follow governance model if there ever was one! The digital equivalent can be seen in the paper, in the governing body and the behind-the-scenes work of establishing enterprise standards and reference implementations of the same.

Apr 18, 2009

Mud Brick Architecture and FEA/DoDAF

Like "service" and before that "object", architecture was borrowed from the tangible context of physical construction and applied to intangible information systems without defining its new meaning in this foreign digital context. This blog returns the term to its historical context in order to mine that context for lessons that might apply to information systems. The (notional) historical context I'll be using is outlined by the following terms:

- Real-brick architecture is the modern approach to construction. It leverages trusted building materials (bricks, steel beams, etc) that are not available directly from nature. In other words, real-brick components are usually not available for free. They are commercial items provided by other members of society in exchange for a fee. The important word in that definition is trusted, which is ultimately based on past experience with that component, scientific testing and certification as fit for use. This is an elaborately collaborative approach that seems to be a uniquely human discovery, especially in its reliance on economic exchange for collaborative work.

- Mud-brick architecture is the primitive approach. Building materials (bricks) are made by each construction crew from raw materials (mud) found on or near the building site. Although the materials are free and might or might not be good enough, mud-brick architecture is almost obsolete today because mud bricks cannot be trusted by their stakeholders. Their properties depend entirely on whoever made them and the quality of the raw materials from that specific construction site. Only the brick makers have this knowledge. Without testing and certification, the home owner, their mortgage broker, the safety inspector, and future buyers have no way of knowing if those mud bricks are really safe.

- Pre-brick architecture is the pre-historic (cave man) approach. This stage predates the use of components in construction at all. Construction begins with some monolithic whole (a mountain for example), then living space is created by removing whatever isn't needed. This context is actually still quite important today. Java software is still built by piling up jar files into a monolithic class path and letting the class loader remove whatever isn't needed. Only newer modularity technologies like OSGI break from this mold.

The pre-brick example mainly relies on such subtractive approaches but additive approaches were used too. For example, mud and wattle construction involves daubing mud onto a wicker frame. I apologize to advocates of green architecture where pre-modern construction is enjoying a well-deserved resurgence. I chose these terms to evoke an evolution in construction techniques that the software industry could do well to emulate, not to disparage green architectural techniques (post-brick?) in any way.

Federal Enterprise Architecture and DoDAF

So how does this relate to the enterprise architecture movement in government or DoDAF in particular? The interest in these terms stems from congressional alarm at the ever-growing encroachment of information technology expenses on the national budget and disappointing returns from such investments. These and other triggering factors led to the Clinger-Cohen Act of 1996 and, after later debacles like Enron, to other measures designed to give Congress and other stakeholders a line of sight into how the money was spent and how much performance improved as a result. The Office of Management and Budget (OMB) is now responsible for ensuring that all projects (>$1M) provide this line of sight. The Federal Enterprise Architecture (FEA) is one of their main tools for doing this government-wide. The Department of Defense Architecture Framework (DoDAF) is a closely related tool largely used by DOD.

I won't summarize this further because that is readily available at the above link. My goal here is to focus attention on what is missing from these initiatives. Their focus is on providing a framework for describing the processes a project manager will follow to achieve the performance objectives that Congress expects in return for funding the project. To return to the home construction example, Congress is the mortgage broker with money that agencies compete for in budget proposals. Government agencies are the aspiring home owners who need that money to build better digital living spaces that might improve their productivity or deliver similar benefits of interest to Congress. Each agency hires an architect to prepare an architecture in accord with the FEA guidelines. Such architectures specify what, not how. They provide a sufficiently detailed floor plan that Congress can determine the benefits expected (number of rooms, etc), the cost, and the performance improvements that might result. They also provide assurances that approved building processes will be followed, typically SDLC (the much-maligned "waterfall" model). What's missing comes next.

What's Missing? Trusted Components

What's missing is the millennia of experience embodied in the distinction between real-brick, mud-brick, and pre-brick architectures. All of them could meet the same functional requirements: the same floor plan (benefits) and performance improvements. The differences are in non-functional requirements such as security (will the walls hold up over time?) and interoperability (do they offer standard interfaces for roads, power, sewer, etc?). Any home-buyer knows that non-functional requirements are not "mere implementation details" that can be left to the builder to decide after the papers are all signed. That kind of "how" accounts for the vast difference between a modern home and a mud-brick or mud and wattle hut, which is obviously of great interest to the buyer and their financial backers. This difference is what is omitted during the closing decision by the "what not how" orientation of the FEA process.

So let's turn to some specific examples of what is needed to adopt real-brick architectures for large government projects. It turns out that all the ingredients are available in various places, but have not yet been integrated into a coherent approach:

- Multigranular Cooperative Practices: This is my obligatory warning against SOA blindness, the belief that SOA is the only level of granularity that we need. But just as cities are made of houses, houses are made of bricks, and bricks are made of clay and sand, enterprise systems require many levels of granularity too. Although SOA standards are fine for inter-city granularity, there is no consensus on how or even whether to support inter-brick granularity: techniques for composing SOA services from anything larger than what Java class libraries support. The only such effort I know of is OSGI, but this seems to have had almost no impact in DOD.

- Consensus Standards: Although much work remains, this is the strongest leg we have to stand on, as broad-based consensus standards are the foundation for all else. However, standards alone are necessary but not sufficient. Notice that pre-brick architecture is architecture without standards. Mud-brick architectures are based on standards (standard brick sizes, for example), but minus "trust" (testing, certification, etc). Real-brick architectures involve wrestling both standards and trust to the ground.

- Competing Trusted Implementations: The main gaps today are in this area so I'll expand on these below:

- Building Codes and Practices: bricks alone are necessary but insufficient. Building codes specify approved practices for assembling bricks to make buildings. This is almost virgin territory today for an industry that is still struggling to define standard bricks.

- Construction Patterns and Beyond: This alludes to Christopher Alexander's work on patterns in architecture and to the widespread adoption of the phrase in software engineering. It has since surfaced as a key concept in DOD's Technical Reference Model (TRM), which has adopted Gartner Group's term, "bricks and patterns". However, this emphasizes the difficulty of transitioning from pre-brick to real-brick architectures. Gartner uses "brick" to mean a SOA service that can be reused to build other services or a standard. That is not at all how I use that term. A brick is a concrete sub-SOA component that can be composed with related components to create a secure SOA service, just as modern houses are composed of bricks. True, standards help by specifying the necessary interfaces, but they are never confused with bricks, which only implement or comply with that standard. Standards are abstract; bricks are concrete. They exist in entirely different worlds: one mental, the other physical.

Implementations of consensus standards are rarely a problem; the problem is that they're either not competing or not trusted. For example, SOA security is a requirement of each and every one of the SOA services that will be needed. This requirement is addressed (albeit confusingly and verbosely, but that's inevitable with consensus standards) by the WS-Security and Liberty Alliance standards. And those standards are implemented by almost every middleware vendor's access management products, including Microsoft, Sun, Computer Associates and others.

Trusted implementations are not as robust but the road ahead is at least clear, albeit clumsy and expensive today. The absence of support for strong modularity (ala OSGI) in tools such as Java doesn't help, since changes in low-level dependencies (libraries) can and will invalidate the trust in everything that depends on them. Sun claims to have submitted OpenSSO for Common Criteria accreditation at EAL3 last fall (as I recall), and I heard that Boeing has something similar planned for its proprietary solution. I've not tracked the other vendors as closely but expect they all have similar goals.

Competing trusted implementations is a different matter that may well take years to resolve. Becoming the sole-source vendor of a trusted implementation is every vendor's goal because they can leverage that trust to almost any degree, generally at the buyer's expense. Real bricks are inexpensive because they are available from many vendors that compete for the lowest price.
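To make the vocabulary concrete, here is a minimal sketch of the distinction between a standard (abstract), bricks (concrete, competing) and trust (earned by testing). Everything in it is an assumption for illustration: `IntegrityStandard`, the two vendor classes, `MudBrick` and `passes_conformance` are hypothetical names, and a two-line check is obviously a toy stand-in for real certification such as Common Criteria.

```python
from abc import ABC, abstractmethod
import hashlib
import hmac


class IntegrityStandard(ABC):
    """The 'standard': an abstract interface, no behavior of its own."""

    @abstractmethod
    def sign(self, key: bytes, message: bytes) -> bytes: ...

    @abstractmethod
    def verify(self, key: bytes, message: bytes, tag: bytes) -> bool: ...


class VendorABrick(IntegrityStandard):
    """A concrete brick from hypothetical vendor A (HMAC-SHA256)."""

    def sign(self, key, message):
        return hmac.new(key, message, hashlib.sha256).digest()

    def verify(self, key, message, tag):
        return hmac.compare_digest(self.sign(key, message), tag)


class VendorBBrick(VendorABrick):
    """A competing brick from hypothetical vendor B (HMAC-SHA512)."""

    def sign(self, key, message):
        return hmac.new(key, message, hashlib.sha512).digest()


class MudBrick(IntegrityStandard):
    """Implements the interface, but nobody has tested it."""

    def sign(self, key, message):
        return b""

    def verify(self, key, message, tag):
        return True  # accepts anything, including tampered messages


def passes_conformance(brick: IntegrityStandard) -> bool:
    """Toy stand-in for certification: accept good input, reject tampering."""
    key, message = b"shared-key", b"payload"
    tag = brick.sign(key, message)
    return brick.verify(key, message, tag) and not brick.verify(key, b"tampered", tag)


bricks = [VendorABrick(), VendorBBrick(), MudBrick()]
trusted = [type(b).__name__ for b in bricks if passes_conformance(b)]
print(trusted)  # ['VendorABrick', 'VendorBBrick']
```

All three classes comply with the interface, which is all a standard can guarantee. Only testing separates the two interchangeable, trustworthy bricks from the mud brick.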

Open Source Software

In view of the importance of the open source movement in industry and its growing adoption in government, it's important to point out why it doesn't appear in the above list of critical changes. What matters to the enterprise is that there be competing trusted implementations of consensus standards, not what business model was used to produce those components.

- Trust implies a degree of encapsulation that open source doesn't provide. Trust seems to imply some kind of "Warranty void if opened" restrictions, at least in every context I've considered.

- The expense of achieving the trusted label (the certification and accreditation process is NOT cheap) seems very hard for the open source business model to support.

- The difference between Microsoft Word (proprietary) and OpenOffice (open source) may loom large to programmers, but not to enterprise decision-makers more focused on whether it will perform all the functions their workers might need.

- Open Source may make more sense for the smallest-granularity components at the bottom of the hierarchy (mud and clay) that others assemble to make (often proprietary) larger-granularity components.

Concrete Recommendations

Enough abstractions. It's time for some concrete suggestions as to how DOD might put them into use in the FEA/DoDAF context.

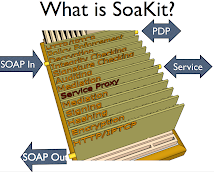

Beware of one-size-fits-all panacea solutions: SOA is great for horizontal integration of houses to build cities so that roads, sewers and power will interoperate. But SOA is extremely poor at vertical integration, for composing houses from smaller components such as bricks. Composing SOA services from Java class libraries is mud and wattle construction, which is not even as advanced as mud-brick construction. One way to see this is in SOA security, for which standards exist as well as (somewhat) trusted implementations. SOA security can be factored into security features (access controls, confidentiality, integrity, non-repudiation, mediation, etc) that can be handled either by monolithic solutions like OpenSSO or repackaged as pluggable components as in SoaKit. Yes, the same features can be packaged as SOA services. But nobody would tolerate the cost of parsing SOAP messages as they proceed through multiple SOA-based stages. Lightweight (sub-SOA) integration technologies like OSGI and SoaKit (based on OSGI plus lightweight threads and queues) would be ideal for this role and would add no performance cost at all.
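The parsing-cost argument can be sketched in a few lines. This is not SoaKit's or OSGI's API; the stage functions, `ALLOWED_USERS` and the toy XML message are all hypothetical, and plain XML stands in for SOAP. The sketch contrasts pluggable stages sharing one parsed message in-process with the same stages deployed as separate services that each parse on entry and serialize on exit.

```python
import xml.etree.ElementTree as ET
from typing import Callable, List

# A stage takes a parsed message and returns it (possibly modified).
Stage = Callable[[ET.Element], ET.Element]

ALLOWED_USERS = {"alice"}  # hypothetical access-control list


def access_control(msg: ET.Element) -> ET.Element:
    """Reject messages from users that are not on the list."""
    if msg.findtext("user") not in ALLOWED_USERS:
        raise PermissionError("user not authorized")
    return msg


def audit_stamp(msg: ET.Element) -> ET.Element:
    """Mark the message as having been audited."""
    msg.set("audited", "true")
    return msg


def run_in_process(raw: str, stages: List[Stage]) -> ET.Element:
    """Sub-SOA composition: parse once, hand the same object to every stage."""
    msg = ET.fromstring(raw)
    for stage in stages:
        msg = stage(msg)
    return msg


def run_as_services(raw: str, stages: List[Stage]) -> str:
    """SOA-style composition: every stage re-parses and re-serializes."""
    for stage in stages:
        raw = ET.tostring(stage(ET.fromstring(raw)), encoding="unicode")
    return raw


request = "<request><user>alice</user><action>read</action></request>"
result = run_in_process(request, [access_control, audit_stamp])
print(result.get("audited"))  # true
```

Both functions produce the same outcome. The difference is that `run_as_services` pays for one parse and one serialization per stage (plus, in a real deployment, a network hop), while `run_in_process` pays for a single parse no matter how many stages are plugged in.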

Publish an approved list of competing trusted implementations: This doesn't mean to bless just one and call it done. That is a guaranteed path to proprietary lock-in. Both "trusted" and "competing" must be firm requirements. At the very least, trust must mean components that have passed stringent security and interoperability testing, and competing means more than one vendor's components must have made it through those tests.
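The two requirements can be written down as a rule. The sketch below is only one possible reading: the `Component` record, its fields, and the vendor and standard names in the sample data are hypothetical (WS-Security is borrowed from the discussion above purely as a label). A standard makes the approved list only if components from at least two different vendors have passed both kinds of testing.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Component:
    standard: str           # the consensus standard it implements
    vendor: str             # who supplies it
    passed_security: bool   # outcome of security testing
    passed_interop: bool    # outcome of interoperability testing

    @property
    def trusted(self) -> bool:
        # "Trusted" here means it passed both kinds of testing.
        return self.passed_security and self.passed_interop


def approved_list(components: Iterable[Component]) -> Dict[str, List[str]]:
    """Map each standard to its trusted vendors, keeping only standards
    for which more than one vendor made it through the tests."""
    vendors_by_standard = defaultdict(set)
    for c in components:
        if c.trusted:
            vendors_by_standard[c.standard].add(c.vendor)
    return {
        std: sorted(vendors)
        for std, vendors in vendors_by_standard.items()
        if len(vendors) >= 2
    }


# Hypothetical sample data, for illustration only.
candidates = [
    Component("WS-Security", "Vendor A", True, True),
    Component("WS-Security", "Vendor B", True, True),
    Component("WS-Security", "Vendor C", True, False),   # failed interop
    Component("Standard X", "Vendor A", True, True),     # sole source
]

print(approved_list(candidates))
# {'WS-Security': ['Vendor A', 'Vendor B']}
```

Note what happens to "Standard X": its only implementation is trusted, yet it stays off the list because a single trusted vendor is exactly the lock-in case this recommendation is meant to prevent.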

Expose the use of government-approved components in FEA/DoDAF: These currently expose only what is to be constructed and its impact on agency performance to stakeholders, leaving how to be decided later. How is a major stakeholder issue that should be decided well before project funding, such as whether components from the government-approved list will be used to meet non-functional requirements such as security and interoperability. As a rule, functional requirements can be met through ad hoc construction techniques. Security and interoperability should never be met that way.

Leverage trusted components in the planning process: The current FEA/DoDAF process imposes laborious (expensive!) requirements that each of hundreds of SOA-based projects must meet. Each of those projects has similar if not identical non-functional requirements, particularly in universal areas like security and interoperability. If trusted components were used to meet those requirements, the cost of elaborating those requirements could be borne once and shared across hundreds of similar projects.

So what?

OMB's mandate to provide better oversight is likely to accomplish exactly that if it doesn't engender too much bottom-up resistance along the way. But to belabor an overworked Titanic analogy, that is like concentrating on auditing the captain's books when the real problem is to stop the ship from sinking.

The president's agenda isn't better oversight. That's someone else's derived goal which might or might not be a means to that end. The president's goal is to improve the performance of government agencies. Insofar as more reliable and cost-effective use of networked computers is a way of doing that, and since hardware is rarely an obstacle these days, the mainline priority is not more oversight but reducing software cost and risk. Better oversight is in a possibly necessary but definitely supporting role.

The best ideas I know for doing that are outlined in this blog. They've been proven by mature industries' millennia of experience against which software's 30-40 years is negligible.

Apr 7, 2009

Agile Enterprise Architecture? You bet!

The title of yesterday's post caused me to google "Agile Enterprise Architecture". And sure enough, others got there before me. Lots of them.

- Agile Enterprise Architecture AgileEA! is a free open source EA Operational Process. It is a framework that is designed to either use as is, or to tailor and publish your own Enterprise Architecture Operational Process.

- AgileEA Wiki Site wiki site for Agile EA Architecture.

- Eclipse EPF Composer is an Eclipse-based editor for building a new EA or refining an existing one.

The EPF EA Composer is only supported on Windows, RedHat/SUSE Linux and "perhaps others". I tried it on a Parallels Ubuntu VM inside MacOSX. It loaded fine, but crashed with an NPE on clicking the various models. Apparently there's a rich text component that isn't quite portable.

So I installed it on my Windows VM, and was frankly impressed. The Composer installed flawlessly (not usual with eclipse in my experience) and ran perfectly. It wasn't immediately obvious how to use it, but it comes with tutorials that brought it home quickly.

Better yet, there's a community developing EPF "plugins" (terrible name IMO). These aren't code, but architectural approaches, such as Scrum. For example, Eclipse has a plugin download page that allows their Scrum process model to be loaded into EPF. The same site also provides a version of this model published as HTML. That is produced by installing the Scrum library into EPF, editing it to suit local conventions, and publishing it as HTML.

In my own work with Scrum, we used wikis to convey our ever-evolving conventions to each other and our stakeholders. Those were like communal legal pads that can record whatever people write there. But this unstructured approach means web pages become chaotic. And since navigational links between pages are up to each contributor, it becomes hard to find anything when the site grows over time.

EPF is more like a workbook. It starts with most of the text you need to define a Scrum methodology, such as descriptions of the key roles and responsibilities. But it allows these to be changed or extended with whatever is missing. All pages are automatically linked into a hierarchy so browsing is much easier. And the structure automatically distributes different roles to different sections, which itself helps to keep the structure intact.

One concern, possibly an inevitable one, is that publishing to HTML implies a centrally planned approach, albeit one that might be ameliorated by using distributed development techniques such as publishing the evolving model for distributed access via Subversion or similar. That is, the only ones empowered to contribute are EPF users with access to the enterprise model. Everyone else gets read-only access to the published (HTML) results, and cannot influence the architecture directly. This is probably inevitable, and arguably good enough for less agile deployments. A middle ground might also be workable, such as importing the published HTML into some writable format such as a wiki, with a select few responsible for manually moving information from the wiki into the underlying model.

Apr 6, 2009

Enterprise Architecture and Agility?

Lately I've been doing a deep-dive into the Enterprise Architecture literature (a long-standing but largely latent interest). I've been struck by the fact that the EA process model is predominately top-down, a cycle that begins with getting buy-in/budget from top management, proceeds through planning and doing, and ends with measuring how well you did, before iterating the same cycle until done. Measuring is the last step in each cycle. Shouldn't it be (one of) the first?

Obviously the goal of any EA effort is making a lasting improvement to an organization. That means influencing decisions of people outside the EA team, who are by definition elsewhere in the organization. Centrally planned dictates from on high are notoriously ineffective at that, if only because effective planning relies on tacit local knowledge that is hard to acquire centrally. For the academically-minded, Friedrich Hayek wrote at length about this in The Use of Knowledge in Society. For the more concrete-minded, the lack of tacit knowledge at the center is one of the main reasons that Russia's planned economy failed.

By contrast, the software development community has been shedding its centrally planned roots (the "waterfall model") in favor of Agile methodologies. Among other things these push decision-making as far down the hierarchy as possible. Can such approaches work higher up, not just for software development, but for EA? I'm not talking either-or but hybrid...getting top-down and bottom-up working together for improvement.

What about making the "last" step (metrics) one of the first? I.e. instead of spending the first iteration exclusively in the executive suite, what about devoting some of that first iteration to measuring (and *reporting*) on the as-is system? Up front, at the beginning, instead of waiting until the first round of improvements is in place. You'll need as-is numbers anyway to measure the improvement, so why not collect as-is performance numbers at the beginning, as one of the first steps of an EA effort?

The lesser and most obvious reason for collecting as-is metrics at the beginning is that you're going to need them at the end of the first iteration to know how well the changes actually worked. But the larger reason is that "decrease widget-making cost" is more understandable to folks on the factory floor than the more abstract goals of the executive suite, "increase market share" and the like.
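A small sketch shows why the baseline has to come first. The metric name and both numbers below are invented for illustration; the only point is that the improvement figure cannot be computed at the end of an iteration unless the as-is number was captured at its start.

```python
def improvement(as_is: float, to_be: float) -> float:
    """Fractional reduction relative to the as-is baseline."""
    if as_is == 0:
        raise ValueError("baseline must be non-zero")
    return (as_is - to_be) / as_is


# Hypothetical numbers: cost per widget, measured before any changes
# are made and again at the end of the first iteration.
baseline = {"widget_cost": 12.50}
after_iteration_1 = {"widget_cost": 11.00}

for metric, as_is in baseline.items():
    gain = improvement(as_is, after_iteration_1[metric])
    print(f"{metric}: {gain:.0%} lower than as-is")
```

Reporting the baseline dictionary on day one also gives the factory floor a concrete number to push against, well before any executive-suite goal has been translated into local terms.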